The Human Element: Teaching Machines to Feel While We Question Their Place

Today’s AI headlines suggest a shift in focus from raw computing power to the nuances of human experience and the physical realities of hardware. From companies hiring actors to teach models how to emote, to a new wave of skepticism regarding “AI wrappers,” the industry seems to be entering a more reflective, and perhaps more scrutinized, chapter of its development.

The most fascinating, if slightly unsettling, development today involves the quest to make AI sound more like us. Handshake AI is currently recruiting improv actors to help train frontier models on the subtleties of human emotion and tone. The goal is to move past the mechanical, “helpful assistant” persona and into territory where a model can shift its emotional state based on context. While this promises more natural interactions, the darker side of synthetic realism is already manifesting. Researchers are warning that deepfake influencers are now being used to peddle health supplements to unsuspecting social media users, blurring the line between marketing and manipulation. When an AI can convincingly mirror human concern or enthusiasm, our traditional skepticism toward digital content may not be enough to protect us.

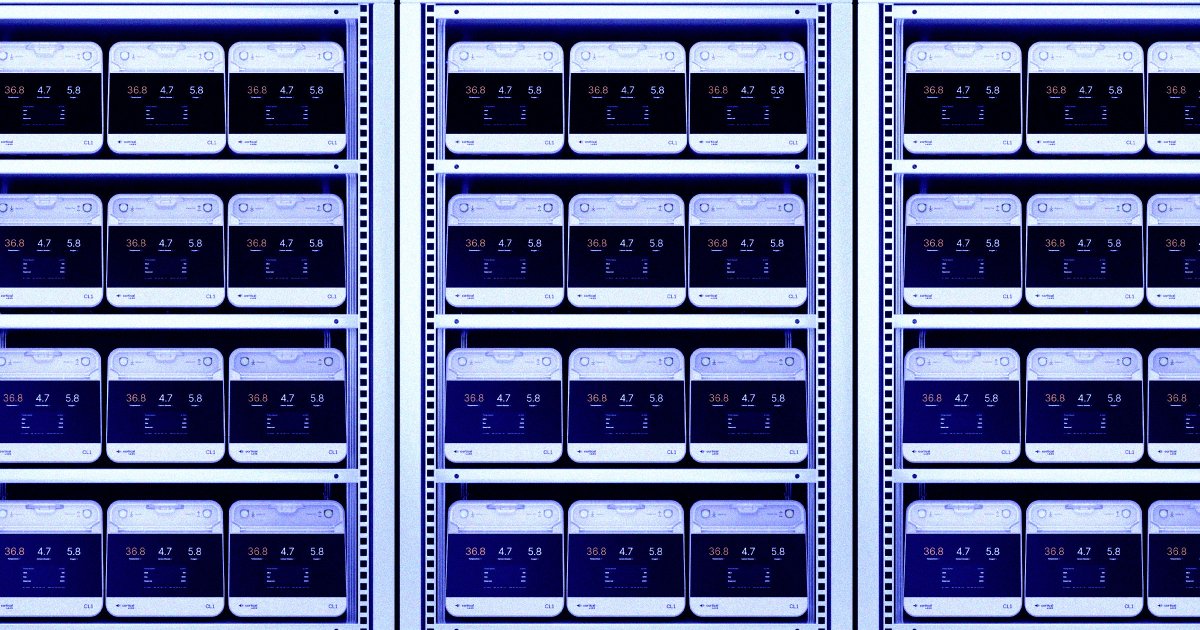

As the software gets more “human,” the hardware is becoming more biological. In a move that feels like science fiction, Cortical Labs is working on biological data centers that use actual human brain cells to process information. This “biocomputing” approach aims to address the massive energy consumption of traditional silicon-based AI. On the more conventional hardware front, independent developers are finding ways to squeeze more life out of existing systems. A new open-source driver called GreenBoost attempts to augment NVIDIA GPU memory using system RAM and NVMe storage, an approach that could let more people run larger large language models (LLMs) on hardware previously considered underpowered.

The industry itself is also facing a reality check regarding value and public perception. Google and Accel recently announced their latest startup cohort in India, and notably, none of the chosen companies were “AI wrappers,” meaning startups that simply put a thin interface over existing models like GPT-4. This signals a growing weariness among investors with superficial AI products. Meanwhile, venture capitalists at the Game Developers Conference expressed confusion and sadness over the intense pushback from gamers against AI integration in games. This cultural friction suggests that while the tech world is all-in on AI, the actual users are increasingly wary of its impact on creativity and job security.

Even the tech giants are beginning to refine their approach to how AI interacts with our daily lives. Microsoft has reportedly scaled back its plans to embed Copilot into every corner of the Windows 11 interface, including notifications and settings, in an effort to reduce “AI bloat.” At the same time, Google is making AI history more accessible within its Android app, moving away from experimental “labs” branding toward a more integrated, permanent feature set.

Today’s stories show that we are moving past the initial shock of what AI can do and into the difficult work of deciding what it should do. Whether it’s reinventing the data center with biological cells or pulling back on intrusive OS features, the industry is finally acknowledging that AI cannot exist in a vacuum: it must eventually answer to the hardware limits of our planet and the social expectations of its people.